
Agent Vault: The Open Source Credential Proxy and Vault for Agents


For a while, the industry has been grappling with one big question: How do we give agents secure access to services without them reading any secrets? Today, we provide an early answer: Agent Vault, an open source, HTTP credential proxy and vault.

At Infisical, we process billions of secrets per month, delivering application configuration and secrets data to the teams, CI/CD pipelines, and servers/applications that need them. We’ve seen it all, from identity-based, centralized secrets management to automated secrets rotation and dynamic secrets schemes for minting secrets on the fly. So when we first thought about how agents might interact with secrets, we thought that traditional secrets management techniques would suffice. We couldn’t have been more wrong.

This is a look at how we're thinking about the problem, and why we think Agent Vault cuts to the right form factor for secrets management in the AI era. Hopefully, this makes for an interesting read and is useful for others who may find themselves designing secrets management solutions for their own agents; you might even consider taking Agent Vault for a spin.

The Challenge

Today’s computing paradigms weren’t designed for agents but for traditional workloads. The issue here is that agents, be they coding agents or sandboxed execution agents, operate in ways that are fundamentally different from anything we’ve seen before; they are non-deterministic and easy to manipulate, which breaks many of the assumptions traditional systems rely on.

Take, for instance, secrets management, where the practice for the last decade has been to deliver secrets directly to workloads from a centralized vault; this works well for programs with fixed execution paths, but falls apart when the program can be induced to use those secrets in ways its author never anticipated.

Consider this attack vector: A malicious actor prompt injects an agent, telling it to sweep and return the secrets available in the agent’s environment; the agent obeys and exposes the secrets back to the caller. Whether it’s poisoned documents in a RAG pipeline or a malicious webpage pulled in by a tool call instructing the agent to forward secrets to the attacker’s controlled endpoint, there are many ways that the agent can be prompt injected, making it a highly vulnerable surface. Unfortunately, even with sufficient guardrails in place, there is no way to guarantee that the agent won’t be manipulated into leaking its secrets.

This is the problem of credential exfiltration. Once it happens, the attacker holds what they need for an adjacent problem, data exfiltration: using the stolen credentials to gain access to valuable data. For example, an attacker who successfully manipulates an agent with emailing capabilities can exfiltrate the credentials needed to read and send emails on behalf of users, which is terrifying.

Early Solutions

Most teams we’ve talked to have implemented basic guardrails, security controls, and more traditional workarounds to mitigate credential exfiltration risk. One such measure is to give agents short-lived credentials wherever possible, for instance through an access/refresh token pattern when an API supports OAuth2; this ephemeral quality can also be achieved through automated secrets rotation and dynamic secrets schemes.

In this case, if an attacker were to get hold of an exposed credential then they’d only be able to use it for a limited time period. This is suboptimal, however, because these measures don’t change the fact that credentials can still be exfiltrated.

The best teams develop their own custom plumbing to combat credential exfiltration. For example, Anthropic’s Managed Agents architecture blog describes building a dedicated proxy service for agents that fetches credentials from a vault and attaches them onto outbound requests going through it; the blog mentions that the harness is never made aware of the credentials.

Browser Use, an infrastructure company that offers web browsers for AI agents, uses a sandboxed-agent-to-control-plane architecture that routes agent requests through a dedicated service, which injects credentials at that proxy layer. In recent days, we’ve also seen cloud providers launch provider-specific solutions, such as Vercel’s credential brokering or Cloudflare’s outbound workers, that only work within their own ecosystems.

This is a strong signal that the old model for delivering secrets to traditional workloads does not work for agents. It is also a strong signal that this is not something that every team should be solving on their own.

The Solution

When you examine how the best teams build and use secure agents, you’ll notice that they draw a trust boundary between agents and credentials. After all, if the agent cannot be trusted with safely handling credentials, then why give it any credentials in the first place? It might just be more logical to broker credentials at a proxy layer sitting between the agent and the service that it’s trying to reach. This is becoming the primary approach that we, as an industry, are currently converging towards: credential brokering.

In short, the agent should not be trusted with direct access to any secrets. Instead, something else should hold the secret on behalf of the agent and attach it at the edge when the agent sends a request through it.

Agent Vault

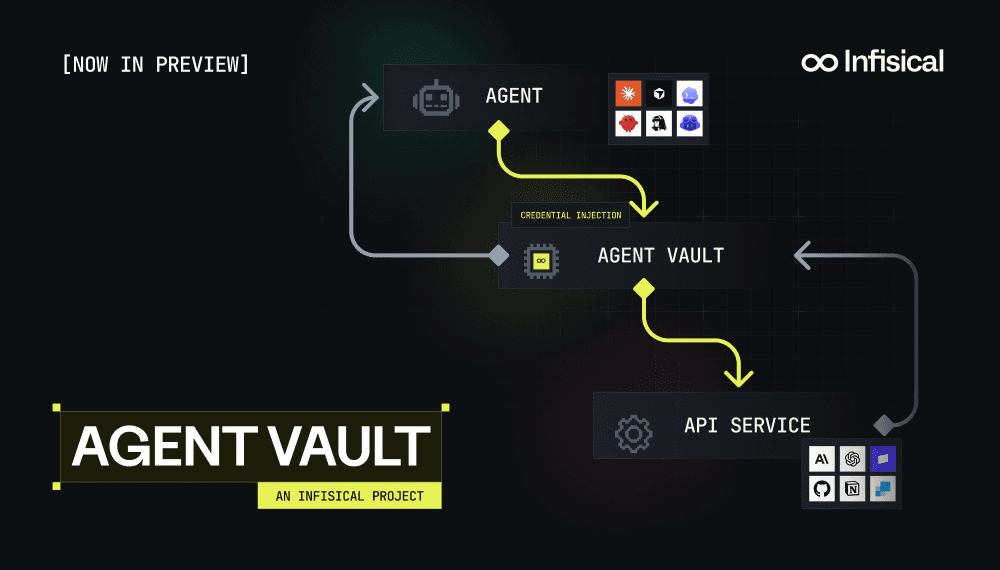

Today, we are announcing Agent Vault, an open source project in research preview with a simple idea: agents should never see the underlying secret in the first place.

With Agent Vault, we’re rethinking how secrets should be consumed by agents and going agent first in a way that is cognizant of humans. We believe that vaults and/or secret stores are here to stay but the way in which secrets are delivered to fit the ergonomics of how agents operate will change drastically.

Agents, at least in their current state, cannot be trusted with holding secrets directly, so there has to be a dedicated forward HTTP proxy beside each agent, be it a dedicated service, sidecar, or egress layer, to securely broker credentials for its access to the external world. With this proxy in place, you can inspect proxied requests and, in the future, apply firewall rules to restrict traffic flowing through the proxy.

The Agent Vault project is an early peek into a trend that we believe many folks, including Anthropic, Brex, and a few open source projects, have caught onto: the separation of the agent from its credentials.

While we anticipate the form factor of Agent Vault to change in the following year and have significant work ahead to make it easier to set up for agentic environments, we believe that it represents a fundamental step forward in how to correctly think about secrets management for AI agents and specifically the ergonomics for how to design for them.

How it Works

Agent Vault ships as a portable binary that acts as both a server and CLI client; it’s a dedicated service that can also be deployed as a Docker container. You can use Agent Vault locally alongside a coding agent like Claude Code or deploy it to your VPS to run alongside your custom agent infrastructure.

If you’re running sandboxed agents, we’d recommend that you deploy an instance of Agent Vault in close proximity to your agents and grant each agent access to specific vaults within it (more on that next).

Within Agent Vault, we introduce four constructs: vaults, credentials, services, and agents.

- A vault is a secure logical container for credentials with services that define how agents can proxy requests through it.

- A credential is a sensitive value stored in a vault that Agent Vault attaches to proxied requests. This can be an API key, database credential, password, or any other sensitive material.

- A service is a host such as api.stripe.com that an agent can reach through the proxy vault. When adding a service to a vault, you specify the hostname for that service and the authentication scheme for it (e.g. Bearer, API Key, etc.); you can specify a vault credential to be used as part of the service authentication or omit and treat the proxy as a passthrough. When an agent makes a proxied request, Agent Vault matches the target host against the vault’s services, attaches the configured credentials, and forwards the request.

- An agent represents an AI agent like Claude Code, OpenClaw, Hermes, or a custom agent that gets its own credential; this construct is not always needed if you intend to delegate vault access to an agentic system through a service token issued by your user.
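To make the relationships between the four constructs concrete, here is a minimal sketch of them as plain Python data classes. This is not Agent Vault's actual schema: every class, field, and value name below is an assumption made for illustration, and the only things taken from the post are the four concepts and the rule that a service may reference a vault credential or act as a passthrough.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Credential:
    name: str
    value: str  # sensitive material; held by the vault, never handed to the agent


@dataclass
class Service:
    host: str                              # e.g. "api.stripe.com"
    auth_scheme: Optional[str] = None      # e.g. "bearer"; None means passthrough
    credential_name: Optional[str] = None  # which vault credential to attach


@dataclass
class Vault:
    name: str
    credentials: dict[str, Credential] = field(default_factory=dict)
    services: dict[str, Service] = field(default_factory=dict)

    def add_credential(self, cred: Credential) -> None:
        self.credentials[cred.name] = cred

    def add_service(self, svc: Service) -> None:
        # A service may only reference a credential that lives in the same vault.
        if svc.credential_name is not None and svc.credential_name not in self.credentials:
            raise KeyError(f"unknown credential: {svc.credential_name}")
        self.services[svc.host] = svc


@dataclass
class Agent:
    name: str
    token: str  # the agent's own credential for talking to the vault
    vault_names: set[str] = field(default_factory=set)


# Placeholder values throughout; none of these are real secrets or real names.
vault = Vault("payments")
vault.add_credential(Credential("stripe_key", "sk_test_placeholder"))
vault.add_service(Service("api.stripe.com", "bearer", "stripe_key"))
vault.add_service(Service("example.com"))  # passthrough: no credential attached
agent = Agent("claude-code", token="agent-token-placeholder", vault_names={"payments"})
```

The point of the shape is that the agent object carries only its own token and a list of vaults it may use; the upstream secret lives exclusively on the vault side.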

In order to use Agent Vault, you first create a vault, add credentials into it, and specify which services can be accessed through the vault and how authentication should work for them.

From there, you create an agent with an agent token, configure your sandboxed agent’s environment to trust the CA certificate of Agent Vault and set the HTTPS_PROXY environment variable to point to the vault; this takes the form HTTPS_PROXY=<agent_credential>:vault@agent-vault_url.com. Together, this instructs HTTP clients, CLIs, and runtimes across most languages to reroute traffic to the proxy and configures trust at the TLS layer so the agent accepts the vault as a valid upstream. Finally, you cut off all outbound traffic access at the network layer so that only the Agent Vault instance is accessible from the sandbox or contained environment.
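As a rough illustration of the environment setup just described, the sketch below builds the variables a sandbox launcher might pass to an agent process. Several things here are assumptions rather than Agent Vault's documented behavior: the `http://` scheme prefix (the form quoted above omits one, but many clients expect it), the host, port, and certificate path, and the choice of CA variables. `SSL_CERT_FILE`, `REQUESTS_CA_BUNDLE`, `CURL_CA_BUNDLE`, and `NODE_EXTRA_CA_CERTS` are real variables read by OpenSSL-based clients, the Python `requests` library, curl, and Node.js respectively, but the post does not say which ones Agent Vault expects you to set.

```python
from urllib.parse import quote


def agent_proxy_env(agent_token: str, vault_host: str, ca_cert_path: str,
                    scheme: str = "http") -> dict[str, str]:
    """Build environment variables that point HTTP clients at the proxy.

    Mirrors the `<agent_credential>:vault@<host>` form from the post; the
    scheme prefix is an assumption added so common clients can parse the URL.
    """
    # Percent-encode the token so characters like "@" or ":" cannot break the URL.
    userinfo = f"{quote(agent_token, safe='')}:vault"
    proxy_url = f"{scheme}://{userinfo}@{vault_host}"
    return {
        "HTTPS_PROXY": proxy_url,
        "https_proxy": proxy_url,             # some tools only read the lowercase name
        "SSL_CERT_FILE": ca_cert_path,        # OpenSSL-based clients, Python's ssl module
        "REQUESTS_CA_BUNDLE": ca_cert_path,   # Python requests
        "CURL_CA_BUNDLE": ca_cert_path,       # curl
        "NODE_EXTRA_CA_CERTS": ca_cert_path,  # Node.js
    }


# Hypothetical token, host, port, and certificate path.
env = agent_proxy_env(
    "agent-token-placeholder",
    "agent-vault.internal:8080",
    "/etc/agent-vault/ca.pem",
)
```

A launcher would typically merge this dictionary into the environment of the agent process it starts, for example via the `env` argument of `subprocess.run`.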

The end result is a flow like this:

- The agent invokes a CLI command, SDK method, MCP tool, or makes an API call to an LLM or API service like api.anthropic.com.

- The request is automatically routed through Agent Vault without the agent knowing anything.

- Agent Vault intercepts the request, attaches the credential, applies any predefined rules, and forwards the request to the destination.

- Voila. The response comes back to the agent, which completes its work. It never sees the underlying secret and never has to modify its workflow.

The preliminary design of Agent Vault is a bit clunky to work with, and we wish we’d had more time to smooth out the developer experience, particularly the configuration setup agents need to start proxying requests through it. But the AI movement is pulling away at warp speed, so we figured it would be best to open source the technology and work with the community on gradual improvements until it works seamlessly across all agentic use cases, each of which has its own nuances.

Design Architecture

When designing Agent Vault, the first thing we considered was how agents typically interact with external services in and out of sandboxed environments; the reality was that there were too many options: API, CLI, SDK, MCP. In building the proxy vault, we could’ve architected another “MCP gateway” (spoiler: we did with Agent Sentinel), but that would’ve only worked for agents using MCP, whereas an agent could use any one of the other three methods to invoke external services. There had to be another way, an interface-agnostic solution, to broker credentials for outbound traffic.

The realization was that whether an agent calls an API, shells out via a CLI, uses an SDK, or invokes an MCP tool, every request eventually becomes an outbound HTTPS connection. Interfaces diverge at the top of the stack; they converge at the bottom. The cleanest place to broker credentials is below the interface, at the one layer every agent’s traffic actually shares: HTTPS itself.

Operating Below the Interface

So instead of introducing yet another interface-specific abstraction like an SDK, Agent Vault operates one layer lower as a local forward proxy that the agent points to via HTTPS_PROXY. This is just an environment variable that you set and that most runtimes and tools already recognize. In fact, it’s the same mechanism that curl, Python, Node, Go, and most HTTP clients have supported for years.
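As a small demonstration that this mechanism needs no special client code, the snippet below shows Python's standard library discovering a proxy purely from the environment. The proxy address is a hypothetical local one, and the environment is temporarily replaced so that other proxy variables on the machine do not interfere.

```python
import os
import urllib.request
from unittest import mock

# Hypothetical proxy address. clear=True swaps in a clean environment for the
# duration of the block so only this one variable is visible.
with mock.patch.dict(os.environ, {"HTTPS_PROXY": "http://127.0.0.1:8080"}, clear=True):
    # getproxies() is what urllib consults before opening a connection.
    proxies = urllib.request.getproxies()

print(proxies)
```

The returned mapping is keyed by URL scheme, so any `https://` request made through `urllib` in that environment would be sent via the configured proxy without the calling code mentioning it.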

Once configured, every outbound request made by the agent flows through the vault regardless of how it was initiated. Note that setting this environment variable alone does not guarantee that traffic flows through the proxy: the agent can unset it, so it only guides compliant clients. In a full deployment, the network must be locked down so that all outbound traffic is forced through Agent Vault.

How Requests Actually Flow

When an agent attempts to reach a service like api.stripe.com, its HTTP client establishes a tunnel to Agent Vault using HTTP CONNECT. In a traditional proxy, that tunnel remains opaque, simply forwarding encrypted traffic. That model breaks down for credential brokering because there’s no way to inject headers without seeing the request.

To solve this, Agent Vault terminates TLS. Using a locally trusted certificate authority, it presents itself as the upstream service, allowing the agent to complete a secure connection against it and send a request that Agent Vault can read in plaintext once it leaves the tunnel.

At that point, Agent Vault can match the request, strip any credentials the agent attached, inject the correct credential from its encrypted store, and establish a new TLS connection to the real upstream with proper verification. The MITM architecture implies that Agent Vault can be extended with firewall-like features in the future to analyze and act on requests at the parameter level.
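The match, strip, and inject step can be sketched as a pure function over request headers. This is only an illustration of the idea, not Agent Vault's implementation: the service table, the set of headers treated as credentials, the Bearer-only handling, and the choice to refuse unmatched hosts are all assumptions.

```python
from typing import Optional

# Headers an agent might set itself; the broker drops these before injecting
# its own. The exact set is an assumption for illustration.
AGENT_AUTH_HEADERS = {"authorization", "x-api-key"}

# Hypothetical service table: host -> (auth scheme, secret from the vault).
SERVICES = {
    "api.stripe.com": ("bearer", "sk_test_placeholder"),
}


def broker_headers(host: str, headers: dict[str, str]) -> Optional[dict[str, str]]:
    """Return the headers to send upstream, or None if the host is not a known service."""
    service = SERVICES.get(host.lower())
    if service is None:
        return None  # assumption: unmatched hosts are refused
    scheme, secret = service
    # Strip whatever credential the agent attached, matching names case-insensitively.
    cleaned = {k: v for k, v in headers.items() if k.lower() not in AGENT_AUTH_HEADERS}
    if scheme == "bearer":
        cleaned["Authorization"] = f"Bearer {secret}"
    return cleaned


upstream = broker_headers(
    "api.stripe.com",
    {"Authorization": "Bearer whatever-the-agent-sent", "Accept": "application/json"},
)
print(upstream)
```

Because the agent's own `Authorization` value is discarded before injection, a prompt-injected agent cannot smuggle a different credential upstream, and it never learns the one that was actually used.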

The response flows back through the same path, and from the agent’s perspective nothing has changed; it issued a normal HTTPS request and received a normal response. The difference is that it never handled the credential in the first place.

Why This Works

Because this happens below the application layer, the integration surface collapses down to almost nothing while policy becomes consistent everywhere. Allowlisting, rate limiting, audit logging, and per-agent credential scoping can be enforced uniformly across APIs, CLIs, SDKs, and any other interface the agent uses, without requiring each of those layers to understand secrets.

There are a few details that make this practical. The proxy authenticates requests via a session token during the CONNECT handshake so that every request is scoped to a specific agent context. The upstream destination is fixed at connection time, preventing mid-request redirection, and all outbound connections from the proxy are re-established with standard TLS verification. The certificate authority used for interception is treated as sensitive material and protected under the same model as the credentials themselves.
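Two of these details, authenticating during CONNECT and pinning the destination, can be sketched with the standard library alone. The sketch assumes the session token arrives as the username in a `Proxy-Authorization: Basic` header, which is how common clients forward userinfo from a proxy URL; the post does not state the exact scheme Agent Vault uses, and the function and type names are hypothetical.

```python
import base64
from typing import NamedTuple


class Tunnel(NamedTuple):
    host: str
    port: int
    token: str


def parse_connect(head: bytes) -> Tunnel:
    """Parse a CONNECT request head and extract the target and the session token."""
    lines = head.decode("iso-8859-1").split("\r\n")
    method, target, _version = lines[0].split(" ")
    if method != "CONNECT":
        raise ValueError("expected CONNECT")
    host, _, port = target.rpartition(":")
    headers = {}
    for line in lines[1:]:
        if not line:
            break  # blank line ends the header section
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    scheme, _, encoded = headers.get("proxy-authorization", "").partition(" ")
    if scheme.lower() != "basic" or not encoded:
        raise PermissionError("missing proxy credentials")
    # Basic credentials are "username:password"; the token is the username part.
    token, _, _ = base64.b64decode(encoded).decode("utf-8").partition(":")
    return Tunnel(host, int(port), token)


def same_destination(tunnel: Tunnel, inner_host_header: str) -> bool:
    """Check that a request inside the tunnel still targets the CONNECT host."""
    return inner_host_header.split(":")[0].lower() == tunnel.host.lower()


# Placeholder token; the request head is what a client would send to the proxy.
creds = base64.b64encode(b"agent-token-placeholder:vault").decode()
head = (
    "CONNECT api.stripe.com:443 HTTP/1.1\r\n"
    "Host: api.stripe.com:443\r\n"
    f"Proxy-Authorization: Basic {creds}\r\n"
    "\r\n"
).encode()
tunnel = parse_connect(head)
print(tunnel.host, tunnel.port)
```

Resolving the token once at handshake time means every request inside the tunnel inherits the same agent scope, and comparing inner `Host` headers against the pinned target is one simple way to reject mid-connection redirection.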

All of this comes back to a simple idea: if an agent can’t be trusted with credentials, it shouldn’t have them.

Give it a Spin

Agent Vault is launching today in research preview and is open source. We are releasing it at this stage because we’d love to get feedback from the engineers who are going to live with this problem.

You should note that Agent Vault is an experimental service and we expect to make significant updates to the form factor, ergonomics, security, and scalability of the proxy in the coming months. To name a few items on the immediate roadmap: we’d like to continue reducing friction both for self-hosting Agent Vault and for integrating it with different agent and agent orchestration use cases, improve the security of the project, and add more credential and firewall capabilities to make it fully featured.

All in all, we believe credential brokering is the right next step for how secrets management should be done for agents and encourage the community to get involved by exploring the repo, reading the docs, and contributing to the codebase.

Separately, if your organization is evaluating credential security for agent deployments that need production-grade reliability and enterprise support, feel free to reach out to our team; we’d be happy to walk you through what we have in store for the commercial path.